Cyber Threat Intelligence - Art, Science, or Something Else Entirely?

Is Cyber Threat Intelligence an art, science, both, or something else entirely?

When I first became interested in, and started working in, Cyber Threat Intelligence, I never gave the actual analytical part of intelligence much thought.

As everyone who has been in this area for a while can probably still remember, hoarding indicators of compromise is a fun activity and can be incredibly enticing when you do not yet have a real clue what you are doing. It is, at least initially, certainly more interesting than diving straight into anthropological, psychological, sociological, or analytical textbooks.

However, as I became more experienced in and, more importantly, more fascinated by the analytical work that makes intelligence .. well, intelligence .. I began to notice that it's not really clear what "type" of activity intelligence analysis is.

To better explain what I mean, let's look at mathematics for example. That's clearly a discipline that's based on or characterized by the methods and principles of science. Sure, there are borderline artistic things you can do with mathematics, and mathematics is a vital part of a lot of art, but at the end of the day it's overwhelmingly science.

Similarly, to use an example that's even closer to technology, programming has an artistic aspect to it. But it's guided by methodical rules. A programmer might be rather liberal and creative in how they interact with a programming language within the confines of the language itself, but ultimately it's still closer to science than it is to art.

Yet if you had asked me how I would classify intelligence analysis, whether I would treat it as art or science (or something else entirely), I could not have given you a straight answer. And when someone, in an advert for a SANS course, did give a straight answer, it bothered me more than it should have:

Threat intelligence analysis has been an art for too long, now it can finally become a science at SANS. Mike Cloppert and Robert M. Lee are the industry 'greybeards' who have seen it all. They are the thought leaders who should be shaping practitioners for years to come.

So I decided to spend some time utilizing my lack of self-control, reasonable research skills, and Internet access to learn more and try to come to a conclusion myself. That one slow afternoon quickly became a rabbit hole that has occupied me, on and off, for the past several months, as I read more and more about the history and evolution of intelligence analysis.

In the process of acquiring and devouring a number of mostly way-too-expensive books, I learned that, as it turns out, there's an ongoing, decades-long debate over whether intelligence analysis is an art or a science. It began as a genuine disagreement between those who championed intuitive expertise and those who demanded systematic rigor, and has since converged on a more sophisticated understanding, although the discussion has so far not been fully resolved.

And while it might look like a semantic dispute, it very much is not. It reflects a, for lack of a better word, deeper struggle over what analysts do, how they do it, how they should be trained, and how much confidence decision-makers can and should place in their judgments.

Reading about this discussion, and about the history of its development, has taught me a lot of things. In this post I want to talk about it at reasonable length. Well, somewhat reasonable length, that is.

Before I begin, a few words about this post. It is the culmination of several months of on-and-off research on the subject of intelligence analysis, particularly in the context of cyber threat intelligence. This research builds on a foundation of professional experience as a security analyst for a non-state cybersecurity organization. I have no accepted or recognized formal training as an intelligence analyst, and no academic experience.

Hence I am approaching this solely as a practitioner in the private sector, with all the limitations and caveats this brings when dealing with subject matter that has traditionally been the domain of, mostly, state organizations or state actors. Please keep that in mind while reading.

Additionally, I would like to extend particular thanks to some of the fine folks at Curated Intelligence for their encouragement. This post would not have made it to the finish line without them. You know who you are. I owe you 🍻.

Starting from the (traditional) bottom ..

When I was a teenager, I stumbled upon a copy of "Strategic Intelligence for American World Policy" by Sherman Kent at a local flea market. Because I was already fascinated by intelligence work back then, I bought it immediately and read it almost in a single sitting. Needless to say, I understood next to nothing of the book, both because of my limited English and because this wasn't really the type of book for a teenager whose only exposure to intelligence work was a mission in Call of Duty.

But when I re-read it a decade later, it was enlightening, and I understood why a lot of people treat it as a kind of "founding text" of (American) intelligence analysis as a self-conscious discipline. Its author joined the Office of Strategic Services' Research and Analysis Branch in 1941, wrote this book in 1949, and headed the CIA's Office of National Estimates from 1952 to 1967.

His vision of intelligence work, which was ultimately institutionally embedded in 2000 by the founding of the Sherman Kent School for Intelligence Analysis, was unapologetically scholarly, and he tried to model analytic processes explicitly on the social sciences. He laid out a seven-step process (problem identification, relevance analysis, data collection, critical evaluation, hypothesis generation, testing, presentation of the best-supported hypothesis) that maps almost cleanly to modern analytic processes (or, as a scholar called it in a paper I read during the research for this post, the "modern hypothetico-deductive ideal", which sounds so unnecessarily preposterous and performative that I just had to mention it here).

Yet while Kent clearly saw intelligence analysis as scientific, he wasn't naive about the limits of "scientism", especially later in his career. In his 1964 essay "Words of Estimative Probability" (declassified in 2012 and surprisingly readable) he attempted to systematize the language of uncertainty itself, something that would only be fully institutionalized some four decades later (and something that CTI still struggles with, but more on that later).
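Kent's essay proposed pairing each estimative phrase with a rough numerical band. A minimal sketch of that idea as a Python lookup table, using the central values and spreads as they are usually reproduced from his table; the names `KENT_WEP`, `band_for`, and `phrase_for` are hypothetical and only illustrate the mapping:

```python
from typing import Optional

# Kent's estimative phrases -> (central estimate, approximate spread), in percent.
KENT_WEP = {
    "certain": (100, 0),
    "almost certain": (93, 6),
    "probable": (75, 12),
    "chances about even": (50, 10),
    "probably not": (30, 10),
    "almost certainly not": (7, 5),
    "impossible": (0, 0),
}


def band_for(phrase: str) -> tuple[int, int]:
    """Return the (low, high) percentage band for an estimative phrase."""
    centre, spread = KENT_WEP[phrase.lower()]
    return (centre - spread, centre + spread)


def phrase_for(probability_pct: float) -> Optional[str]:
    """Return the first phrase whose band contains the probability, or None."""
    for phrase in KENT_WEP:
        low, high = band_for(phrase)
        if low <= probability_pct <= high:
            return phrase
    return None  # the bands do not cover the whole 0-100 scale


print(phrase_for(80))  # -> probable
print(phrase_for(15))  # -> None
```

The `None` result is not a bug in the sketch: Kent's bands leave gaps (for example between 12% and 20%), so some probabilities simply have no matching phrase.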

If Kent anchored intelligence analysis in the scientific camp, in scholarly method, Richards Heuer relocated it to cognitive psychology and, at least to some extent, to the art camp: an art that, in the form of the human brain, needed to be "disciplined" into working more scientifically. His "Psychology of Intelligence Analysis", originally a series of internal CIA articles drafted in the late 1970s and 1980s and republished as a book in 1999, advanced three interlocking claims:

- That the human mind is "poorly wired" to handle the inherent and induced uncertainties of intelligence work.

- That mere awareness of cognitive bias does not, by itself, mitigate it.

- That tools and techniques that gear the analyst's mind to apply higher levels of critical thinking can substantially improve analysis.

His catalogue of biases (confirmation, anchoring, availability, mirror-imaging, vividness, over-sensitivity to consistency, hindsight) became canonical, and his "Analysis of Competing Hypotheses" (ACH) procedure became the most frequently invoked exemplar of structured analytic technique.
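The core of ACH, as Heuer describes it, is a matrix of hypotheses against evidence in which the analyst looks for disconfirming rather than confirming evidence, and sets aside evidence that is consistent with every hypothesis because it has no diagnostic value. A minimal Python sketch of that bookkeeping, assuming a simple three-value rating scheme; the hypotheses, evidence, and function names below are made up purely for illustration:

```python
CONSISTENT, INCONSISTENT, NEUTRAL = "C", "I", "N"

# Hypothetical example: three hypotheses about who is behind an intrusion.
HYPOTHESES = ["criminal group", "state actor", "insider"]

# Evidence -> rating against each hypothesis (all entries invented for illustration).
EVIDENCE = {
    "ransom note left on hosts": {
        "criminal group": CONSISTENT, "state actor": INCONSISTENT, "insider": NEUTRAL,
    },
    "previously unseen custom malware": {
        "criminal group": NEUTRAL, "state actor": CONSISTENT, "insider": INCONSISTENT,
    },
    "valid credentials used for access": {
        "criminal group": CONSISTENT, "state actor": CONSISTENT, "insider": CONSISTENT,
    },
}


def inconsistency_scores(hypotheses, evidence):
    """Count, per hypothesis, the pieces of evidence rated inconsistent with it."""
    scores = {h: 0 for h in hypotheses}
    for ratings in evidence.values():
        for h in hypotheses:
            if ratings.get(h, NEUTRAL) == INCONSISTENT:
                scores[h] += 1
    return scores


def non_diagnostic(hypotheses, evidence):
    """Evidence rated the same against every hypothesis cannot discriminate between them."""
    return [item for item, ratings in evidence.items()
            if len({ratings.get(h, NEUTRAL) for h in hypotheses}) == 1]


def rank(hypotheses, evidence):
    """Order hypotheses from least to most inconsistent evidence (ties keep input order)."""
    scores = inconsistency_scores(hypotheses, evidence)
    return sorted(hypotheses, key=lambda h: scores[h])


print(inconsistency_scores(HYPOTHESES, EVIDENCE))
print(non_diagnostic(HYPOTHESES, EVIDENCE))
print(rank(HYPOTHESES, EVIDENCE))
```

The ranking only orders hypotheses by how much evidence argues against them; the judgment calls (which hypotheses to list, how to rate each cell) stay with the analyst.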

Interestingly enough, while Heuer's work did indeed move intelligence analysis towards the "art camp", he himself rejected the art-science dichotomy as fruitless. By his own account, his project was less to convert intelligence analysis into a science than to discipline the mind by externalizing reasoning, making internal thought processes systematic and transparent so that they can be shared, built on, and easily critiqued.

Whatever the aspirations of Kent and Heuer were, a 2005 analysis of the analytic culture in the United States Intelligence Community argued that there was nothing even resembling a scientific method in the way the intelligence community actually practiced intelligence analysis. Drawing on hundreds of interviews and direct observation across different analytic units, its author, Rob Johnston, found "no baseline standard analytic method".

According to him, the dominant practice was "limited brainstorming on the basis of previous analysis, thus producing a bias toward confirming earlier views". He also pointed out that training was idiosyncratic and largely on-the-job. His was, in effect, a friendly but devastating examination showing that, whatever the intelligence community said about scientific method, what it actually did was a craft: learned by apprenticeship, varying widely across stovepipes, and only loosely connected to the formal procedures people like Kent and Heuer had advocated.

I have to admit that at this point in my research I was, for the first time, close to just giving up, because it looked like the answer to my initial question (whether intelligence analysis was an art or a science) was something entirely different, something along the lines of "well, like .. neither, to be honest".

Luckily for me, this was when I stumbled upon "Improving Intelligence Analysis - Bridging the Gap between Scholarship and Practice", in which the author argues that intelligence analysis is best understood as a "profession-in-formation" possessing characteristics of both art and science, something oscillating between artistic and scientific self-understanding. The author of this book, Stephen Marrin, also wrote an article in the International Journal of Intelligence and CounterIntelligence directly addressing "my" question. In it, he does not declare a winner; instead, he maps analysts along a continuum of structure versus intuition and recommends mixed analytic teams that capture both modes.

And interestingly enough, even though both the book and the article were released fifteen years ago, their conclusions are more or less accepted truth to this day. There is still ongoing discussion about a wide variety of aspects of intelligence analysis, with literature continuing to be released, such as "Intelligence: From Secrets to Policy" by Mark Lowenthal (which leans more towards the "art" side of things) or the continuously updated Oxford Handbook on Intelligence Studies.

Yet there seems to be, at least from the outside, some form of consensus that "traditional" intelligence analysis is neither art nor science, but a bit of both and also something else entirely. Which begs the question (as in "begs-the-question-as-a-stylistic-instrument-to-lead-the-reader-to-the-next-part-of-this-blogpost", not actually begging the question): what about intelligence analysis in the context of Cyber Threat Intelligence?

.. now we're (at the modern) here!

When looking for a clear definition of "Cyber Threat Intelligence", I found this one cited multiple times, although at the time of writing the original source is only accessible through the Internet Archive's Wayback Machine:

Threat intelligence is evidence-based knowledge, including context, mechanisms, indicators, implications and actionable advice, about an existing or emerging menace or hazard to assets that can be used to inform decisions regarding the subject's response to that menace or hazard.

NIST SP 800-150 defines Cyber Threat Information as follows:

Cyber threat information is any information that can help an organization identify, assess, monitor, and respond to cyber threats. Cyber threat information includes indicators of compromise; tactics, techniques, and procedures used by threat actors; suggested actions to detect, contain, or prevent attacks; and the findings from the analyses of incidents. Organizations that share cyber threat information can improve their own security postures as well as those of other organizations.

Ultimately, I'd argue that Cyber Threat Intelligence is, as a product, rather similar to more "traditional", "analogue" intelligence products. What distinguishes CTI from its "parent discipline" is the operating environment rather than the methods that produce it. CTI deals with threat actors who "innovate at the speed of code rather than of policy" (I'm still mad that someone else came up with this excellent quote).

CTI involves data that is voluminous, often machine-generated, and incomplete in sometimes annoyingly vendor-specific ways. Deception can easily be engineered into those technical artifacts, which makes the often automated decisions based on CTI challenging. Interestingly enough, the human factor is, at least on an operational level, not as large or as significant as in traditional intelligence, because of the emphasis on machines and data. At least on the defensive side, that is; on the offensive side, we are dealing with creative adversaries and the ever-shifting ground of the threat landscape. So .. science versus art all over again?

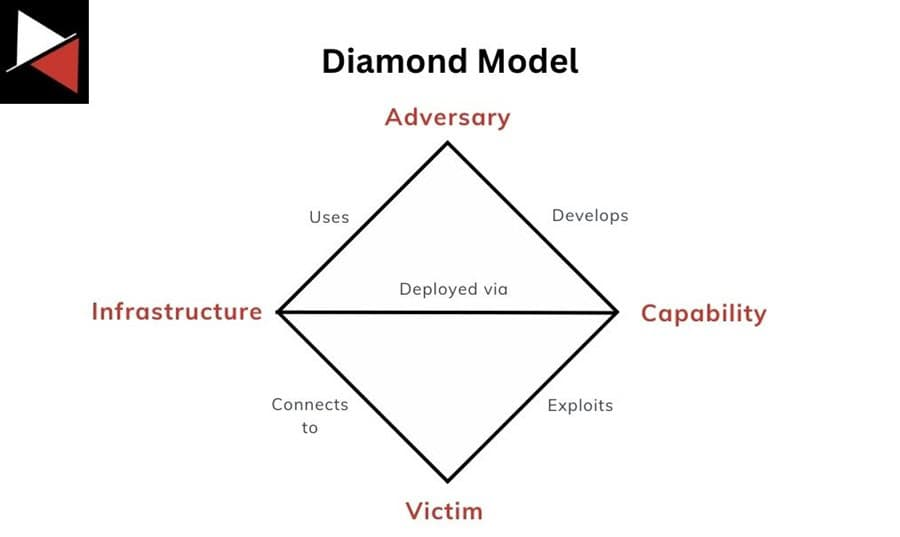

The strongest evidence for CTI as a scientific discipline lies in its formal frameworks. The Diamond Model, for example, is explicitly framed by its authors as the application of scientific principles (measurement, testability, and repeatability) to intrusion analysis. The model defines the basic atomic element of any intrusion event as a four-feature tuple (adversary, capability, infrastructure, victim) and builds up a formal axiomatic system, with the foundational claim being that for every intrusion event there exists an adversary taking a step towards an intended goal by using a capability over infrastructure against a victim to produce a result.

Because of these basic conditions the model supports analytic pivoting along edges between features, the assignment of confidence values, and the formal grouping of events into activity threads / campaigns.
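To make the pivoting idea more concrete, here is a minimal Python sketch of the four core features as a record type, plus a naive pivot that, given one feature value, collects the other feature values seen in events sharing it. It is a simplification of the model (no meta-features, no activity threads), the 0..1 confidence scale is an assumption, and `DiamondEvent`, `pivot`, and the events themselves are hypothetical, using reserved documentation IP addresses and made-up names:

```python
from collections import defaultdict
from dataclasses import dataclass

FEATURES = ("adversary", "capability", "infrastructure", "victim")


@dataclass(frozen=True)
class DiamondEvent:
    """One intrusion event reduced to the model's four core features."""
    adversary: str
    capability: str
    infrastructure: str
    victim: str
    confidence: float = 1.0  # assumed 0..1 analyst confidence in the event


def pivot(events, feature, value, min_confidence=0.0):
    """From one feature value, collect the other features' values in matching events."""
    if feature not in FEATURES:
        raise ValueError(f"unknown feature: {feature}")
    related = defaultdict(set)
    for event in events:
        if event.confidence < min_confidence or getattr(event, feature) != value:
            continue
        for other in FEATURES:
            if other != feature:
                related[other].add(getattr(event, other))
    return dict(related)


# Hypothetical events: documentation-range IPs and made-up names.
EVENTS = [
    DiamondEvent("unknown", "loader-x", "198.51.100.7", "org-a", 0.8),
    DiamondEvent("unknown", "loader-x", "203.0.113.9", "org-b", 0.6),
    DiamondEvent("unknown", "webshell-y", "203.0.113.9", "org-c", 0.3),
]

# Pivot from a capability to its infrastructure, then from that infrastructure onwards.
print(pivot(EVENTS, "capability", "loader-x")["infrastructure"])
print(pivot(EVENTS, "infrastructure", "203.0.113.9")["capability"])
```

Raising `min_confidence` drops low-confidence events from a pivot, which is one simple way the assigned confidence values could constrain how far an analyst follows an edge.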

Other well-known frameworks similarly build on scientific principles. The Cyber Kill Chain, for example, formalizes intrusion as a seven-phase chain (reconnaissance, weaponization, delivery, exploitation, installation, command and control, actions on objectives) paired with a matrix of defensive courses of action (detect, deny, disrupt, degrade, deceive, destroy). F3EAD (Find, Fix, Finish, Exploit, Analyze, Disseminate), a framework drawn from U.S. Special Operations Forces and adapted for incident response, fuses operations and intelligence into an iterative learning loop in which each (defensive) operation generates data refining the next hypothesis.
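The Kill Chain's pairing of phases with courses of action is often drawn as a matrix. A minimal Python sketch, assuming a defender simply lists controls per cell and wants to see which phases lack a given course of action; `empty_matrix`, `gaps`, and the controls filled in below are hypothetical examples, not a recommended set:

```python
KILL_CHAIN = (
    "reconnaissance", "weaponization", "delivery", "exploitation",
    "installation", "command and control", "actions on objectives",
)
COURSES_OF_ACTION = ("detect", "deny", "disrupt", "degrade", "deceive", "destroy")


def empty_matrix():
    """One (initially empty) list of controls per phase / course-of-action cell."""
    return {phase: {coa: [] for coa in COURSES_OF_ACTION} for phase in KILL_CHAIN}


def gaps(matrix, coa):
    """Kill chain phases, in order, that have no control for the given course of action."""
    if coa not in COURSES_OF_ACTION:
        raise ValueError(f"unknown course of action: {coa}")
    return [phase for phase in KILL_CHAIN if not matrix[phase][coa]]


# Hypothetical controls, only to show how the matrix is filled in.
MATRIX = empty_matrix()
MATRIX["delivery"]["detect"].append("mail gateway sandbox")
MATRIX["command and control"]["detect"].append("network IDS signature")
MATRIX["command and control"]["deny"].append("egress firewall rule")

print(gaps(MATRIX, "detect"))
```

Because the phases are ordered, the gap list also shows how early in an intrusion a defender could first act, which is the main point of mapping controls onto the chain.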

There are others as well, such as the Pyramid of Pain, the PEAK framework, MITRE ATT&CK, .. the point I'm trying to make is that, taken together, these frameworks instantiate a remarkable amount of the apparatus traditionally associated with scientific maturation.

Taking these into account alone indicates that CTI is materially closer to the "scientific pole" than traditional (all-source) intelligence has yet managed to come. If it weren't for ..

Note: I have been thinking long and hard about whether I want to talk about the role of data science, machine learning, artificial intelligence and the like, and their impact on intelligence analysis in the context of CTI. Ultimately I decided against it, for two main reasons. One is, in all honesty, that it's not my forte: I simply lack the prerequisite knowledge and understanding to make an educated guess there. The other is that we're seeing such large, fast developments in local language models and other areas of artificial intelligence at the moment that any statement I make now might be fully outdated in a couple of weeks. So there's indeed a chance that all these things will pull CTI more strongly towards "science", but .. we simply don't know yet, and I'm not comfortable enough to speculate about that in this post.

Artribution Attribution (and more)

Attribution is the biggest "artistic stronghold" in CTI. There's no methodology, no flow-chart, no recipe for correct attribution. Any attempt at a linear representation would fail to reflect the uniqueness and varied flow of the wide range of different cases and scenarios. It's a process, and a nuanced one at that.

The most obvious case against automated attribution based solely on technical indicators is one like the 2018 use of Olympic Destroyer against the Pyeongchang Winter Olympics. Instead of simply trying to cover their tracks, the attackers planted tracks pointing in several directions, embedding forensic artifacts that pointed toward North Korean, Chinese, and Russian threat actors all at once. Only manual verification by security researchers uncovered the sophisticated false flags. Computers are good at detecting computers, I'm not going to argue against that. But where adversaries are humans innovating on novel TTPs, "science" systematically lags.

Similarly, hypothesis generation resists full automation. Instead of trying to come up with my own paragraph, I'll blatantly steal from "Generating Hypotheses for Successful Threat Hunting" by Robert M. Lee and David Bianco. In their paper, the authors argue that threat hunting is a proactive and iterative approach to detecting threats. Although threat hunters should rely heavily on automation and machine assistance, the process itself "cannot be fully automated".

An analyst's ability to formulate a hunt hypothesis "is derived from observations. An observation could be as simple as noticing a particular event that 'just doesn't seem right' or something more complicated, such as supposition about ongoing threat actor activity in the environment based on a combination of past experience with the actor and external threat intelligence".

Obviously, the previously discussed catalogue of human biases reappears in CTI as well. The biases of particular concern in CTI (mirror-imaging an adversary's decision logic onto one's own, confirmation bias when clustering indicators, anchoring on an initial hypothesis during attribution, vividness bias toward technically spectacular intrusions over quieter but more strategic ones) are exactly the ones Heuer writes about, merely with some "cyber" sprinkled on top.

But, at the end of the day, I think the most consequential and thus most important question for the debate this post talks about is reproducibility. The evidence I was able to find is scant, and not particularly encouraging. In a paper released in 2020, scientists compared the indicators provided by two major commercial CTI vendors and found that the two providers cover very different indicators, even as they pertain to the same threat actors, suggesting a low level of coverage of their overall operations. Similarly, Mandiant writes in one of their blog posts:
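One simple way to express how little two feeds overlap is the Jaccard index (the size of the intersection divided by the size of the union), alongside a per-feed coverage ratio. A minimal Python sketch with invented indicator sets (reserved documentation IPs and `.example` domains); the helper names are hypothetical, and these are not necessarily the metrics used in the paper, just an illustration of the idea:

```python
def normalize(indicators):
    """Lower-case and strip indicators so trivial formatting differences don't count."""
    return {i.strip().lower() for i in indicators}


def jaccard(a, b):
    """Size of the intersection divided by the size of the union (1.0 for two empty sets)."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def coverage(a, b):
    """Share of the indicators in `a` that also appear in `b`."""
    return len(a & b) / len(a) if a else 0.0


# Invented feeds about the "same" actor: reserved IPs and .example domains.
VENDOR_A = normalize({"198.51.100.7", "203.0.113.9", "update.evil.example", "cdn.bad.example"})
VENDOR_B = normalize({"203.0.113.9", "c2.other.example", "192.0.2.44"})

print(round(jaccard(VENDOR_A, VENDOR_B), 3))  # 1 shared indicator out of 6 distinct ones
print(coverage(VENDOR_A, VENDOR_B), coverage(VENDOR_B, VENDOR_A))
```

Coverage is asymmetric on purpose: a small feed can be largely contained in a big one while the reverse is not true, which a single symmetric score hides.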

Conversely, sloppy clustering between threat actors tracked by different entities can quickly lead to confusion and imprecision. For example, the threat actor dubbed “Winnti” became increasingly vacuous due to inconsistent methodologies, dubious clustering, and a lack of collaboration between different security organizations.

This is a so-called inter-rater reliability problem (a term I learned while reading up in preparation for writing this post). As a publication by the private sharing group Curated Intelligence put it:

Every organization has their own telemetry, data, standards, procedures, and confidence levels. Unless specified publicly, there is no reason to believe any two organizations have the same visibility and standards.
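Cohen's kappa is one common way to quantify inter-rater reliability: it takes the observed agreement between two raters and corrects it for the agreement they would reach by chance, kappa = (p_o - p_e) / (1 - p_e). A minimal Python sketch, assuming two organizations have already mapped their differently named clusters onto a shared set of labels for the same incidents (in practice the hard part); the labels and the `cohens_kappa` helper are hypothetical:

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa: observed agreement corrected for agreement expected by chance."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must label the same, non-empty list of items")
    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    # Chance agreement: probability both raters independently pick the same label.
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used one and the same label for everything
    return (observed - expected) / (1 - expected)


# Hypothetical: two organizations cluster the same four incidents into shared labels.
ORG_A = ["cluster-1", "cluster-1", "cluster-2", "cluster-2"]
ORG_B = ["cluster-1", "cluster-2", "cluster-2", "cluster-2"]

print(cohens_kappa(ORG_A, ORG_B))  # 0.5: 75% raw agreement, 50% expected by chance
```

The example shows why raw agreement flatters: three out of four matching labels sounds good, but half of that agreement would be expected even if both organizations labeled at random with their own label frequencies.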

Not quite here and not quite there .. again

There are indicators that CTI is more science than art, and there are indicators that CTI is more art than science; nothing is really conclusive. But this inconclusiveness differs from that of traditional all-source intelligence analysis.

CTI is materially closer to a scientific discipline than traditional intelligence analysis. Where traditional intelligence lacks a baseline standard analytic method, CTI has a thicker substrate of formal frameworks, machine-readable ontologies, hypothesis-driven workflows, and the like. Indicator processing, telemetry enrichment, behavioral clustering, and (partially) adversary emulation are largely scientific in temperament: they are about automation and replication. However, CTI nevertheless retains, and perhaps intensifies, the artistic core of intelligence analysis.

Inference of adversary intent, discovery of new TTPs, attribution (under the technical deception that analysts might be confronted with), and strategic-level forecasting are largely artistic. They depend on judgment, non-technical knowledge, imagination, and some sort of recognition of outliers and patterns. And the reproducibility problem I talked about above clearly reveals that competent analysts working from "competent" data routinely reach different conclusions about the same threat actor.

The most defensible reading of CTI is therefore the same as the most defensible reading of traditional intelligence - a hybrid profession-in-formation, in which structured methodology and expert judgment are not competitors but complements.

But, on top of that, I don't think that there is, at least for now, a terminal answer. And after all this reading and writing, I think there perhaps ought not to be one.